MLWhiz Weekly AI/ML/RecSys Newsletter #5

The week the AI industry's partnership era ended — and the consulting era began.

Story of the Week: The Great Unbundling

The Microsoft–OpenAI partnership came apart Sunday night. Revenue sharing, the AGI clause, and exclusivity all dissolved in a single restructuring.

Twenty-four hours later, Sam Altman and AWS CEO Matt Garman were on Stratechery announcing OpenAI models on Amazon Bedrock. The speed tells you the deal was pre-negotiated; only the Microsoft contract was holding it back.

The practical change for ML teams: GPT models now run natively on Bedrock alongside Claude, Llama, and Mistral. If you standardized on AWS but had to route around it for OpenAI access, that workaround is gone. If you picked Azure specifically for OpenAI, you have a real alternative for the first time. Multi-cloud routing for frontier models is table stakes now. Expect inference prices to drop and cross-cloud model parity to start mattering in vendor evaluations.
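For teams that have not built multi-cloud routing yet, the core of it is a priority-ordered failover loop. The snippet below is a minimal sketch under stated assumptions: `route`, `primary_cloud`, and `secondary_cloud` are hypothetical names, and the two provider functions are stand-ins for whatever SDK calls your clouds actually expose.

```python
from typing import Callable, Sequence

# Each provider is a (name, callable) pair. The callables are placeholders:
# in practice they would wrap the client library for each cloud.
Provider = tuple[str, Callable[[str], str]]


def route(prompt: str, providers: Sequence[Provider]) -> tuple[str, str]:
    """Try providers in priority order; return (provider_name, response)."""
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # broad on purpose: any failure triggers failover
            errors.append(f"{name}: {exc}")
    raise RuntimeError("all providers failed: " + "; ".join(errors))


# Hypothetical stand-ins for real endpoints.
def primary_cloud(prompt: str) -> str:
    raise TimeoutError("capacity exhausted")


def secondary_cloud(prompt: str) -> str:
    return f"echo: {prompt}"


providers = [("primary", primary_cloud), ("secondary", secondary_cloud)]
print(route("hello", providers))
```

A real router would likely add per-provider timeouts, cost or latency weighting, and narrower exception handling. The ordering list is the part that matters for vendor evaluations.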

Then both labs made a stranger move. Anthropic and OpenAI launched enterprise consulting arms on the same day, aimed at different markets. Anthropic’s $1.5B venture embeds Claude engineers inside customer companies in a McKinsey-style model — advise, implement, bundle the work. OpenAI’s $10B “Deployment Company” follows the Palantir playbook instead — forward-deployed engineers writing custom systems on-site, the way Palantir embeds with banks and governments.

Two signals for practitioners.

First, neither lab believes API access alone defends revenue anymore — they’re building services moats around the models.

Second, if your team does complex deployment work for clients, you’re now competing with the model vendors themselves. A lab engineer with internals-level model knowledge embedded at your customer’s site is a different threat than a cheaper API.

One earnings number worth carrying through: all three major clouds reported AI demand outrunning capacity, with Pichai calling Google Cloud demand “capacity-constrained.” If you’re planning training runs or large-batch inference, supply is the bottleneck — book GPU capacity early.

The week in order:

Monday: Microsoft–OpenAI partnership restructured

Tuesday: OpenAI ships on Bedrock

Sunday: both leading labs launch consulting practices

The partnership era — labs needing clouds for compute, clouds needing labs for models — is over. Models are commoditizing. Differentiation is moving to the deployment layer.

Models That Dropped This Week

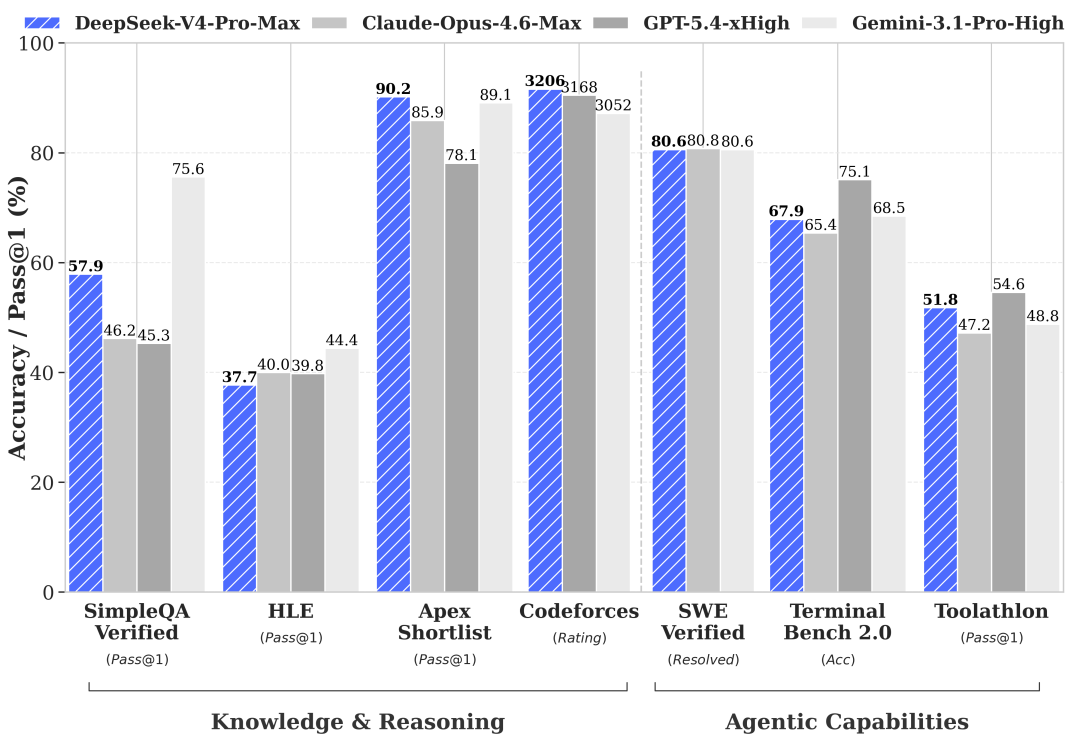

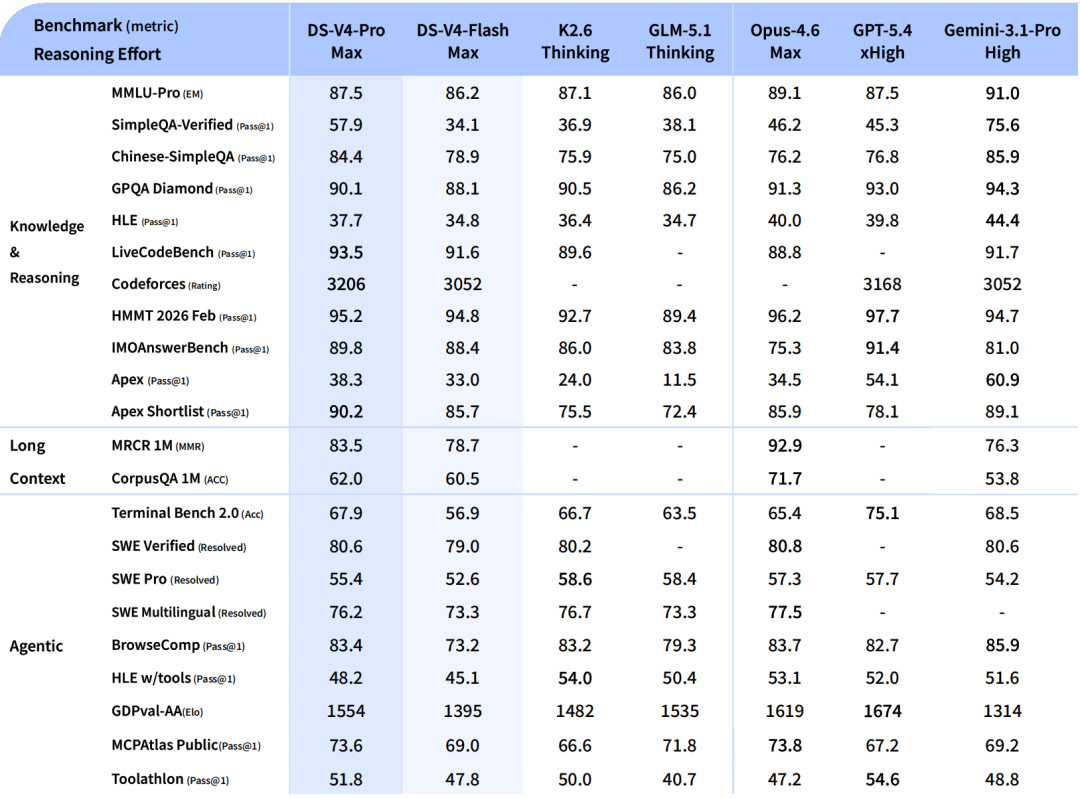

DeepSeek V4 Pro & Flash — The model that dominated every benchmark conversation this week.

V4 Pro: 1.6T parameters (49B active, MoE), million-token context, MIT license.

V4 Flash: 284B total, 13B active. LiveCodeBench 93.5, Codeforces 3206 — and it costs roughly one-seventh of Claude Opus 4.7.
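To put the total versus active parameter counts in perspective, here is a quick back-of-the-envelope calculation using only the figures quoted above. The helper name `active_fraction` is ours, not DeepSeek's.

```python
def active_fraction(active_b: float, total_b: float) -> float:
    """Share of parameters used per token in a mixture-of-experts model."""
    return active_b / total_b


# (active, total) in billions of parameters, as quoted in this issue.
models = {
    "DeepSeek V4 Pro": (49, 1600),   # 49B active of 1.6T total
    "DeepSeek V4 Flash": (13, 284),  # 13B active of 284B total
}

for name, (active, total) in models.items():
    share = active_fraction(active, total)
    print(f"{name}: {share:.1%} of weights active per token")
```

That works out to roughly 3.1% for Pro and 4.6% for Flash. As a general rule for MoE models, per-token compute tracks the active count while memory footprint still tracks the total, so plan serving hardware around the larger number.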

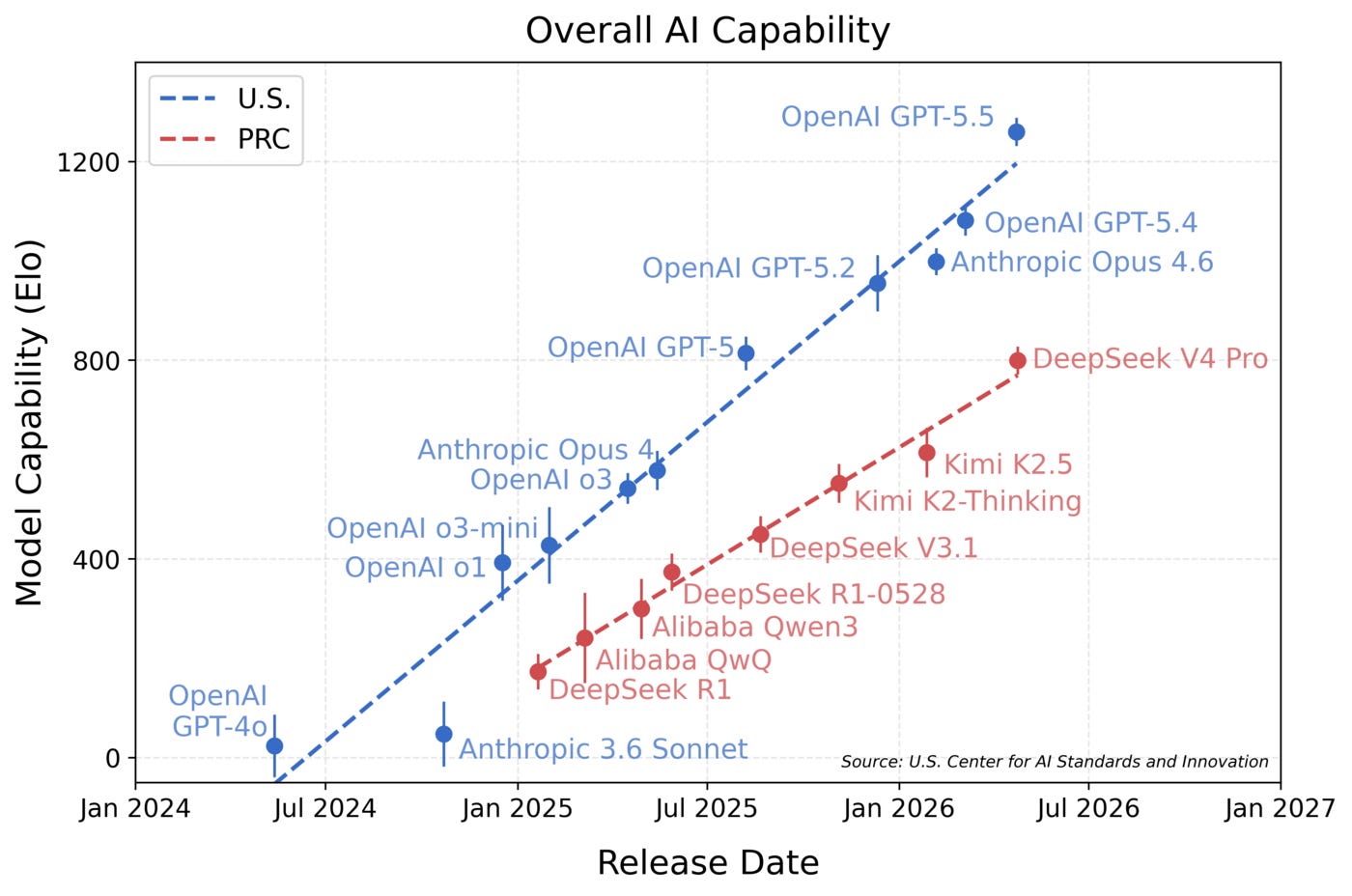

NIST’s CAISI evaluation says it lags the US frontier by about 8 months. At that price-to-performance ratio, the gap is easy to tolerate. It is part of a wave of four Chinese open-weight releases in 12 days, none costing more than a third of the Western frontier.

Kimi K2.6 (Moonshot AI, April 30 — trending May 2-3) — A 1T-parameter MoE model (32B active) that won the AI Coding Contest with a 7-1-0 record, beating GPT-5.5, Claude Opus 4.7, and Gemini.

First open-weight model legitimately competitive on hard multi-file coding tasks. HLE with tools: 54.0% vs GPT-5.4’s 52.1%. SWE-Bench Pro: 58.6%. This is the one to benchmark on your local setup.

Mistral Medium 3.5 (Mistral, April 30) — The largest open-weight model from a European lab: 128B parameters on HuggingFace.

Fills the gap between 70B models that run on consumer hardware and the 400B+ frontier. For teams that need more capability than Llama 3.3 70B but can’t justify API costs, this is the new option.

Papers That Matter

TACHIOM: Efficient Multivector Retrieval with Token-Aware Clustering — ColBERT-style multi-vector retrieval has been “better but too slow” for production for years. TACHIOM changes the math:

247x faster clustering than k-means for centroid construction

Up to 9.8x retrieval speedup over SOTA on MS-MARCO and LoTTE

Maintains or improves quality despite the massive speedup

The trick: exploiting token-level structure that prior systems ignored, scoring documents using centroids alone without expensive token-level computation.

If you benchmarked ColBERT against single-vector HNSW last year and concluded it was too expensive, rerun that benchmark with TACHIOM.
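To make "scoring with centroids alone" concrete, here is a toy sketch of the general centroid-interaction idea: each document is reduced to the set of centroid IDs its tokens map to, and a MaxSim-style score is computed against those centroids instead of the original token vectors. This is an illustration of the concept, not TACHIOM's actual algorithm, and the function names and toy vectors are assumptions made for the example.

```python
def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def query_centroid_sims(query_vecs, centroids):
    """Score every query token against every centroid once per query."""
    return [[dot(q, c) for c in centroids] for q in query_vecs]


def centroid_only_score(sims, doc_centroid_ids):
    """For each query token, take the best centroid present in the document, then sum."""
    return sum(max(row[cid] for cid in doc_centroid_ids) for row in sims)


centroids = [[1.0, 0.0], [0.0, 1.0], [0.7, 0.7]]  # toy 2-D centroids
docs = {"doc_a": {0, 2}, "doc_b": {1}}              # centroid IDs per document
query = [[1.0, 0.0], [0.6, 0.8]]                    # two query token vectors

sims = query_centroid_sims(query, centroids)        # reused for every document
ranked = sorted(docs, key=lambda d: centroid_only_score(sims, docs[d]), reverse=True)
print(ranked)
```

The saving comes from the similarity table: it is built once per query, and each document is then scored with lookups only, with no per-token vector math.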

NuggetIndex: Governed Atomic Retrieval for Maintainable RAG — Every production RAG system has a staleness problem nobody wants to talk about. You update the source docs, re-embed, and cross your fingers.

NuggetIndex stores atomic information units (“nuggets”) as managed records with temporal validity intervals and lifecycle states: active, deprecated, superseded. The results:

42% improvement in nugget recall

55% reduction in fact conflicts

64% reduction in generator input length
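A rough picture of what a governed nugget record might look like, assuming a plain dataclass. The field names, the `is_retrievable` rule, and the sample rate-limit facts are all illustrative guesses, not the paper's schema.

```python
from dataclasses import dataclass
from datetime import date
from enum import Enum
from typing import Optional


class State(Enum):
    ACTIVE = "active"
    DEPRECATED = "deprecated"
    SUPERSEDED = "superseded"


@dataclass
class Nugget:
    text: str
    valid_from: date
    valid_to: Optional[date]  # None means open-ended
    state: State = State.ACTIVE

    def is_retrievable(self, on: date) -> bool:
        """Serve only active nuggets whose validity interval covers `on`."""
        in_window = self.valid_from <= on and (self.valid_to is None or on < self.valid_to)
        return self.state is State.ACTIVE and in_window


# Made-up facts for illustration: the older one has been superseded.
index = [
    Nugget("Rate limit is 100 req/min", date(2024, 1, 1), date(2025, 1, 1), State.SUPERSEDED),
    Nugget("Rate limit is 500 req/min", date(2025, 1, 1), None),
]

today = date(2025, 6, 1)
print([n.text for n in index if n.is_retrievable(today)])
```

Filtering on state and validity before generation is one plausible way stale facts stay out of the prompt, which would fit with the reported drops in conflicts and input length.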

Some Good Reads

Democratizing Machine Learning at Netflix: Building the Model Lifecycle Graph (Netflix Engineering) — Netflix tackles a problem every large ML org hits: your source systems don’t know about entities in other domains.

The Model Lifecycle Graph discovers and materializes cross-system relationships, giving teams visibility into how models, features, datasets, and experiments connect. If you’ve ever asked “which models break if I change this feature?” — this is the architecture to study.
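The "which models break?" question is a downstream reachability query once the relationships are materialized. Here is a minimal sketch using a plain adjacency dict and breadth-first search. The entity names and the `impacted` helper are invented for the example and say nothing about Netflix's actual implementation.

```python
from collections import deque

# Edges point from an upstream entity to the things that consume it.
# All names are made up for illustration.
downstream = {
    "feature:watch_time": ["dataset:engagement_v2"],
    "dataset:engagement_v2": ["model:ranker", "model:churn"],
    "model:ranker": ["experiment:homepage_ab"],
}


def impacted(start: str, kind: str = "model:") -> list[str]:
    """Breadth-first walk collecting every downstream node of the given kind."""
    seen, queue, hits = {start}, deque([start]), []
    while queue:
        node = queue.popleft()
        for nxt in downstream.get(node, []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
                if nxt.startswith(kind):
                    hits.append(nxt)
    return hits


print(impacted("feature:watch_time"))
```

The traversal is the easy part. The hard part, and the reason the post is worth reading, is discovering those edges across systems that were never designed to know about each other.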

From Clicks to Conversions: Shopping Conversion Candidate Generation at Pinterest (Pinterest Engineering) — Most recommendation systems optimize for clicks. Pinterest rebuilt their entire candidate generation pipeline to optimize for actual purchase conversions.

Different features, different training signals, different retrieval strategies. The shift from engagement to conversion is “obvious in hindsight” but requires rethinking everything. For any RecSys team where downstream conversion matters more than clicks, this is the reference.

What This Week Was Really About

This was the week the AI industry’s first-generation agreements expired — all of them, all at once.

Every assumption from the first wave is being renegotiated.

The market told you exactly who’s winning. Google surged 10% in a single session — its best day in years — because Wall Street sees the full-stack AI company converting capex into revenue.

And then there’s the Chinese open-weight wave. Four labs released frontier-adjacent models in 12 days — DeepSeek V4, Kimi K2.6, GLM-5.1, MiniMax M2.7 — none costing more than a third of Claude Opus 4.7. All at roughly the same capability ceiling.

The “open models can’t compete” narrative is dead.

The question now is whether Western frontier labs can differentiate on capability fast enough to justify the price premium. This week, both Anthropic and OpenAI answered by pivoting toward services.

When your model becomes a commodity, sell the deployment.

Quick Hits

Musk admitted under oath that xAI trained Grok on OpenAI’s models — model distillation confirmed in sworn testimony, and it may be more consequential than the trial itself if OpenAI pursues a separate claim.

Anthropic is eyeing a $50B raise at a valuation of up to $900B, while ChatGPT uninstalls surged 132% YoY and Claude downloads grew 11x. The consumer AI market is more competitive than anyone expected.

The Academy Awards banned AI-generated acting and writing — producers must sign an “Affidavit of Human Origin” starting with the 99th Oscars.