MLWhiz Weekly Recsys/ML/GenAI Newsletter # 6

The week the AI found curl vulnerabilities and the developers discussed AI usage while coding

Hey, Rahul here! 👋 Each week, I publish long-form ML+AI posts covering ML, AI, and System design for MLwhiz. Paid subscribers also get how-to guides with full code walkthroughs. I publish occasional extra articles.

I love keeping track of everything week to week — here's what happened this week. Enjoy this free weekly post! For those who want to dive deeper into any of these topics, that's what my paid posts are for.

🏆 Story of the Week: The AI Cybersecurity Arms Race Goes Live

Two weeks ago, Anthropic’s Mythos found 271 vulnerabilities in Firefox with near-zero false positives. Impressive. This week, the story went a step further.

It started when Daniel Stenberg confirmed that Mythos had found a vulnerability in curl, software installed on virtually every internet-connected device on Earth. 28 years of human auditing missed it. That’s crazy.

Then, OpenAI launched Daybreak, its direct competitor to Mythos for automated vulnerability discovery. In one move, AI security auditing became a head-to-head race.

While finding vulnerabilities in one's own products and fixing them is great for defense, the same tools in adversarial hands don’t find and fix vulnerabilities — they find and exploit them.

Palo Alto’s CEO, Nikesh Arora, recently warned that organizations have 3-5 months before attackers broadly access these same capabilities. Amateur attackers can point an AI at your attack surface and get working exploits back.

This matters because it highlights the risk of bad actors exploiting vulnerabilities in any system: banking, finance, healthcare, you name it. The effects could be disastrous, to say the least.

🤖 Models That Dropped This Week

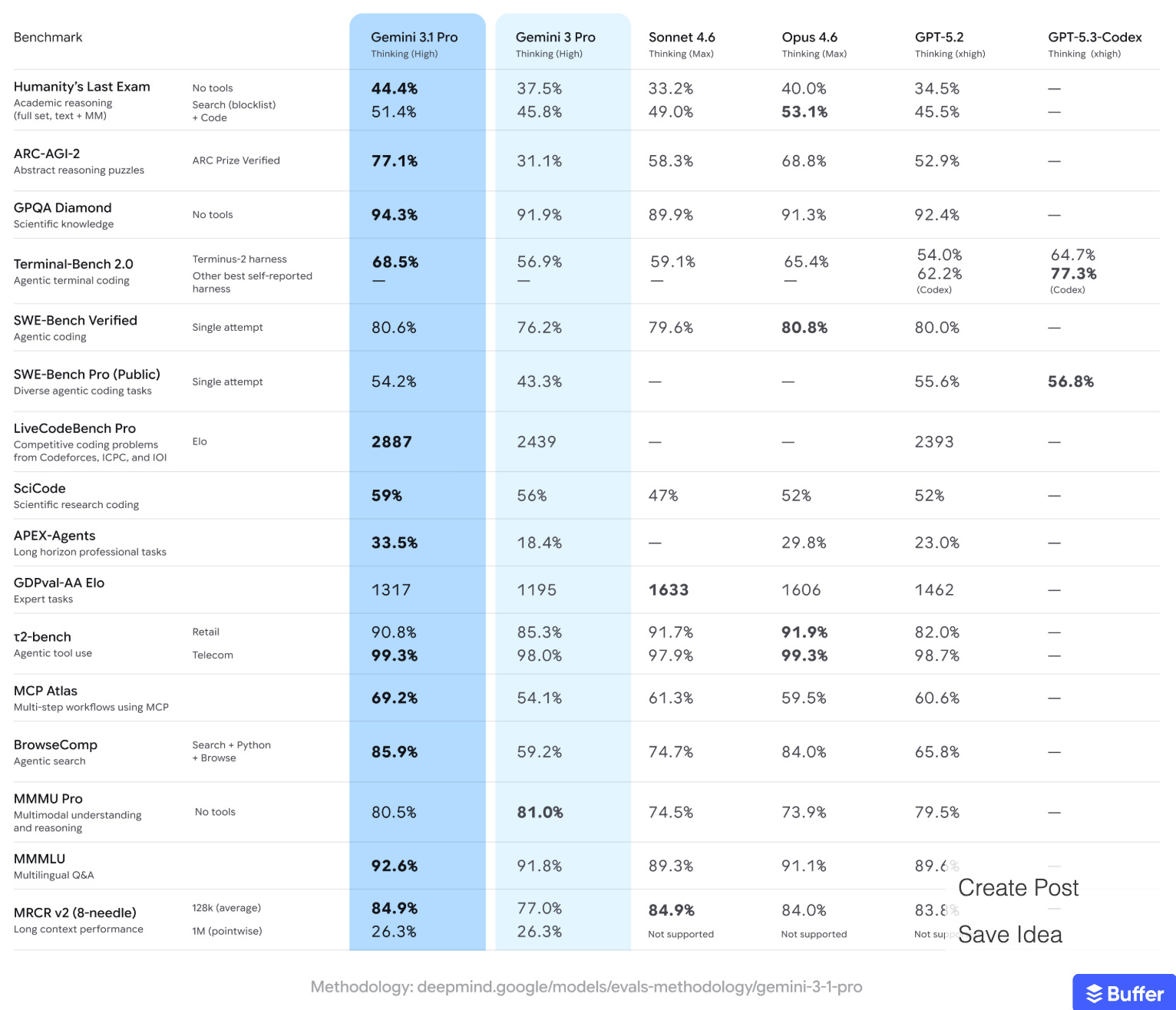

Gemini 3.1 Pro + Flash-Lite (Google, May 11) — Google shipped Gemini 3.1 Pro with a 1M token context window and a 77.1% score on ARC-AGI-2, a benchmark that measures genuine reasoning over pattern matching. Flash-Lite went GA at $0.25/million tokens, making it the cheapest frontier-class inference available.

I really like the SVG generation capability of the Pro model. I tried to generate an SVG logo of MLWhiz, and I got the following. The top video is by Gemini 3.1 Pro, the second one is from Claude 4.7 Opus. The thing I noticed is that, using the same prompt, Gemini took one-tenth of the time that Claude took. I honestly can't decide which one is better, though. Try them both out.

Needle (Cactus Compute, May 12) — Gemini’s tool-calling capability distilled into a 26M parameter model — small enough to run on virtually any device. If you need agentic tool use on edge devices or embedded systems, this is a pretty good solution.

ZAYA1-8B (Zyphra, May 9) — A reasoning-focused MoE model with only 700M active parameters out of 8B total, built on Zyphra’s MoE++ architecture. It matches or exceeds DeepSeek-R1-0528 on math and coding benchmarks while using a fraction of the inference compute. Trained entirely on AMD hardware — proving AMD viability for frontier training, not just serving.

Daybreak (OpenAI, May 12) — OpenAI’s entry into AI security tooling, an agent designed to compete directly with Anthropic’s Mythos for automated vulnerability discovery in production codebases. The launch validates AI security auditing as a real product category. Timing follows Mythos’s high-profile Firefox and curl finds.

🧠 Papers That Matter

SIRA: Compressing Multi-Round Agentic Search Into a Single Retrieval Call — Last week, Microsoft’s AgenticRAG showed 5.9x improvement from making retrieval iterative. This week, a paper from Rice and Facebook Research asks the opposite question: can you get the same quality without iteration?

The answer is yes. SIRA enriches documents offline with missing search vocabulary and expands queries with evidence-discriminating terms, then delivers everything via a single weighted BM25 call.

The insight is that instead of making retrieval smarter at query time (as agents do), you make the documents smarter at index time. If you’re building a search or RAG system, try this before you add an agent loop.
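To make the idea concrete, here is a rough sketch of the "smarter documents, single call" pattern in plain Python. This is not the paper's code: the `WeightedBM25` class, the `enrich` helper, the toy documents, and the hand-written `EXTRA_VOCAB` and `QUERY` weights are all my own stand-ins (in a real pipeline the extra vocabulary and the query weights would come from some offline and query-time expansion step). It only shows the shape: append missing search terms to each document at index time, then score once with per-term weights.

```python
import math
from collections import Counter


def tokenize(text):
    # Deliberately naive: lowercase and split on whitespace.
    return text.lower().split()


def enrich(docs, extra_vocab):
    # Index-time step: append extra search vocabulary to each document.
    # extra_vocab maps a document index to a string of added terms (hypothetical).
    return [f"{doc} {extra_vocab.get(i, '')}".strip() for i, doc in enumerate(docs)]


class WeightedBM25:
    """Tiny BM25 scorer where every query term carries its own weight."""

    def __init__(self, docs, k1=1.5, b=0.75):
        self.k1, self.b = k1, b
        tokens = [tokenize(d) for d in docs]
        self.tfs = [Counter(t) for t in tokens]
        self.lengths = [len(t) for t in tokens]
        self.avg_len = sum(self.lengths) / len(docs)
        self.n = len(docs)
        # Document frequency: in how many documents each term appears.
        self.df = Counter(term for tf in self.tfs for term in tf)

    def idf(self, term):
        # Lucene-style BM25 idf, which stays non-negative.
        df = self.df.get(term, 0)
        return math.log(1 + (self.n - df + 0.5) / (df + 0.5))

    def score(self, weighted_query, i):
        tf, length = self.tfs[i], self.lengths[i]
        total = 0.0
        for term, weight in weighted_query.items():
            f = tf.get(term, 0)
            if f == 0:
                continue
            denom = f + self.k1 * (1 - self.b + self.b * length / self.avg_len)
            total += weight * self.idf(term) * f * (self.k1 + 1) / denom
        return total

    def search(self, weighted_query, top_k=3):
        # One pass over the index: no agent loop, no second retrieval round.
        scores = [self.score(weighted_query, i) for i in range(self.n)]
        ranked = sorted(range(self.n), key=lambda i: -scores[i])
        return [(i, scores[i]) for i in ranked[:top_k]]


DOCS = [
    "restart the service to apply the new configuration",
    "the service exposes metrics on port 9090",
    "quarterly revenue grew in the cloud segment",
]
# Hypothetical offline enrichment: words a user might search for
# that the original document never uses.
EXTRA_VOCAB = {0: "reboot reload settings config"}
# Hypothetical expanded query with per-term weights.
QUERY = {"reload": 1.0, "config": 1.0, "settings": 0.5}

raw_index = WeightedBM25(DOCS)
enriched_index = WeightedBM25(enrich(DOCS, EXTRA_VOCAB))
print(enriched_index.search(QUERY, top_k=1))
```

On the raw index none of the query terms match anything, so every document scores zero. On the enriched index the first document comes out on top, because the vocabulary gap was closed before the query ever arrived.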

📝 Some Good Reads

“From Vibe Coding to Agentic Engineering” (Andrej Karpathy) — One year after coining “vibe coding,” Karpathy argues the more serious discipline emerging is agentic engineering — and the distinction matters. I really liked this quote: “You can outsource your thinking, but you can’t outsource your understanding.”

“Agents Need Control Flow, Not More Prompts” (Bear Blog) — The most-discussed technical post of the week on Hacker News. The argument: the agent ecosystem is over-indexed on prompt engineering and under-indexed on deterministic control flow, state machines, and proper orchestration.
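If you want a feel for what "control flow, not more prompts" can look like, here is a minimal sketch of my own (not taken from the post). The states, the `run_agent` function, and the `plan_fn` / `act_fn` / `verify_fn` callables are all hypothetical names; in practice those callables would wrap model or tool calls. The point is that the transitions and the retry budget live in ordinary code, not in a prompt.

```python
from enum import Enum, auto


class State(Enum):
    PLAN = auto()
    ACT = auto()
    VERIFY = auto()
    DONE = auto()
    FAILED = auto()


def run_agent(task, plan_fn, act_fn, verify_fn, max_retries=2):
    """Drive plan -> act -> verify with a hard retry limit.

    The callables may be model-backed, but which step runs next is
    decided here, deterministically.
    """
    state, retries = State.PLAN, 0
    plan, result = None, None
    trace = []
    while state not in (State.DONE, State.FAILED):
        trace.append(state)
        if state is State.PLAN:
            plan = plan_fn(task)
            state = State.ACT
        elif state is State.ACT:
            result = act_fn(plan)
            state = State.VERIFY
        elif state is State.VERIFY:
            if verify_fn(result):
                state = State.DONE
            elif retries < max_retries:
                retries += 1
                state = State.PLAN  # re-plan, but only a bounded number of times
            else:
                state = State.FAILED
    trace.append(state)
    return state, result, trace


# Stub "tools" standing in for real model calls: the action fails once, then succeeds.
attempts = {"count": 0}


def make_plan(task):
    return f"plan for {task}"


def flaky_act(plan):
    attempts["count"] += 1
    return "ok" if attempts["count"] >= 2 else "error"


def is_ok(result):
    return result == "ok"


final_state, final_result, trace = run_agent("fix the build", make_plan, flaky_act, is_ok)
print(final_state.name, [s.name for s in trace])
```

Because the loop is bounded by `max_retries`, a misbehaving step ends in `FAILED` instead of spinning forever, and the `trace` gives you something to log and debug.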

💡 What This Week Was Really About

Three stories dominated, and they’re all about the same thing: AI’s capabilities are outrunning every institution designed to manage them.

Start with cybersecurity. The defense side and the attack side are scaling simultaneously, at AI speed. I don’t think most teams have updated their threat models for this world.

Then there’s the question of coding adoption and its effects. Uber burned its entire 2026 AI budget in four months because 84% of engineers adopted Claude Code, and 70% of committed code is now AI-generated.

Meanwhile, the dark side showed up at Amazon. Ars Technica reported that employees are tokenmaxxing to hit internal AI usage metrics — generating unnecessary tokens to satisfy management mandates rather than deriving genuine productivity gains.

On the developer side, the post “I’m Going Back to Writing Code by Hand” is getting a lot of discussion. The argument isn't anti-tool, it's anti-dependence. When the AI writes the code, you stop building the mental model of how the system works. You become a reviewer of code you didn't design. Over time, your ability to design atrophies. And who wants that?

Developers are also discussing “If AI Writes Your Code, Why Use Python?” The angle is that Python won because humans read code more than they write it. But if Claude is doing both the reading and the writing, maybe we should target languages with better type systems, better performance, and better compiler guarantees, and let the AI handle the ergonomics. It asks the right question: what does “developer experience” mean when the developer is an LLM?

Three threads, one shape. Security built around human-speed adversaries, coding workflows built around human authorship, language design built around human readability. All three assumptions are breaking at once.

Whatever shakes out, my own plan is simple: use AI aggressively for code I understand and could write myself, cautiously for code that’s teaching me something new. At a minimum, I’d like to keep my brain from atrophying.

⚡ Quick Hits

Anthropic secures 220,000 GPUs via SpaceX and eyes $1T valuation — the largest single compute partnership in AI history, with Claude Code revenue alone reportedly topping $2.5B.

Uber burned its entire 2026 AI budget in four months — 84% of engineers are agentic coding users, 70% of committed code is now AI-generated, and per-engineer monthly API costs hit $500–$2,000.

Big Tech passes 100,000 jobs shed in 2026 — LinkedIn (900), Cisco (4,000), Cloudflare (1,100), Meta (8,000) add to the total while collectively guiding to $725B in combined AI CapEx.

Google in talks with SpaceX for orbital data centers — “Project Suncatcher” plans 81 TPU-equipped satellites with 10 Tbps laser links, because terrestrial power constraints have delayed or canceled half of 2026’s planned data centers.