From RNNs to Transformers: Building Sequential Recommenders (Part 1)

RecSys Series Part 9a: Implementing GRU4Rec and SASRec on Steam Games — with production deployment patterns

Hey, Rahul here! 👋 Each week, I publish long-form posts covering ML, AI, and system design for MLwhiz.

Every tech revolution follows the same pattern. First, we solve the problem one way. Then, we realize we’ve been solving the wrong problem.

Natural language processing: we spent a decade on classification (sentiment, NER, QA as pick-the-right-answer).

Then GPT said: generation subsumes classification. Just generate the output.

Recommendation systems are having their moment right now. We spent years building a multi-stage pipeline: retrieve 10K candidates with a Two-Tower model, score 1K with a ranker, re-rank the top 100. But what if the recommender could just generate the next item directly? That’s where this series is headed.

But you can’t understand the generative revolution without understanding what came before it.

In this two-part post, we’ll trace the full evolution: how RNNs first cracked sequential recommendation, how Transformers took over, and ultimately how generative models are rewriting the rules entirely.

This is Part 1, covering GRU4Rec (2016), SASRec (2018), the BERT4Rec controversy, and production deployment patterns.

Part 2 will cover Semantic IDs, TIGER, HSTU, and who’s deploying generative recommenders in production today.

Let’s dive in!

1. The Sequential Problem: Why Order Matters

Let’s say that you just finished watching Inception. Netflix recommends Interstellar. You watch it. Next up: Arrival.

This watching order is not random, and it is more than “you like sci-fi, so here is more sci-fi.”

There’s a trajectory here. The recommender is following your path through mind-bending sci-fi, from Christopher Nolan’s Inception and Interstellar to Denis Villeneuve’s Arrival, and what you watched second might change what you should see third.

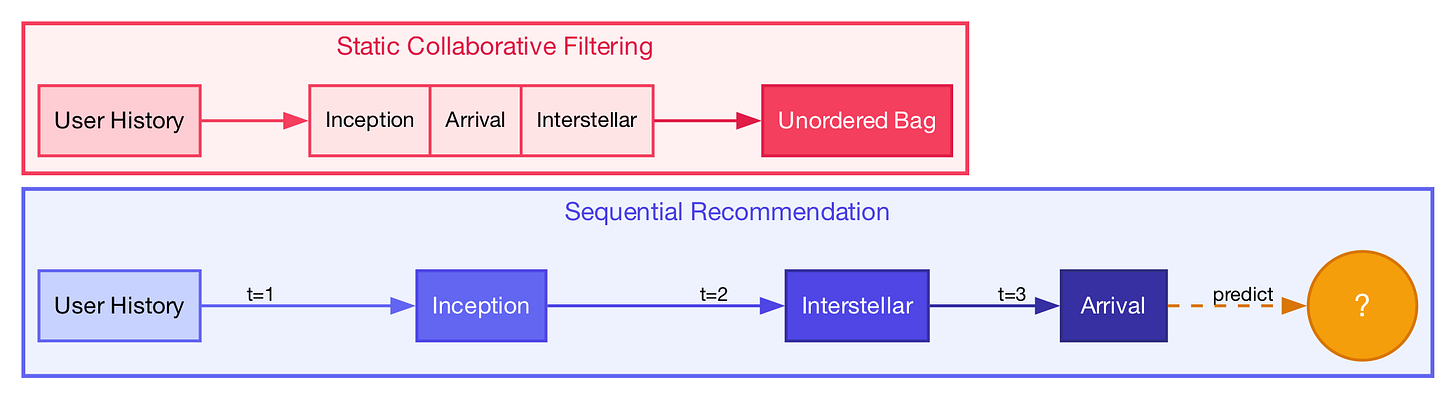

In the first post of this series, we covered collaborative filtering, which stores user history in a user-item matrix with no notion of order: a bag of items. So, if you watched [Inception, Interstellar, Arrival], traditional CF treats that the same as [Arrival, Inception, Interstellar]. But the order you watched them in can point to a very different next recommendation.

Sequential models fixed that. They learned to predict not just “what you might like” but “what comes next.”
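Here is a tiny sketch of that difference, using the two orderings from above. The helper names (`bag_of_items`, `last_item`) are made up for illustration and are not from any library. The bag-of-items view cannot tell the two histories apart, while even the crudest sequential signal (the most recent item) can.

```python
from collections import Counter

history_a = ["Inception", "Interstellar", "Arrival"]
history_b = ["Arrival", "Inception", "Interstellar"]


def bag_of_items(history):
    """Order-free view of a history: item -> interaction count."""
    return Counter(history)


def last_item(history):
    """Simplest possible sequential signal: the most recent item."""
    return history[-1]


# The unordered view sees two identical users.
same_bag = bag_of_items(history_a) == bag_of_items(history_b)

# The ordered view sees two different "current states".
same_context = last_item(history_a) == last_item(history_b)

print(same_bag)      # True
print(same_context)  # False
```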

Formalising the Problem

Given a sequence of items a user has interacted with:

[i₁, i₂, i₃, ..., iₙ]

Predict the next item: iₙ₊₁

This formulation applies across domains:

E-commerce: product browsing → purchase prediction

Streaming: watch history → next video

Music: listening sequence → next song

News: reading pattern → next article
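To make the formulation concrete, here is a minimal sketch of how one ordered history can be turned into next-item training examples. The function name `next_item_examples` and the `max_len` cap of 50 are assumptions for illustration, not values taken from the GRU4Rec or SASRec papers.

```python
def next_item_examples(sequence, max_len=50):
    """Turn one user's ordered history into (prefix, target) pairs.

    For [i1, i2, i3, i4] this yields:
        [i1]         -> i2
        [i1, i2]     -> i3
        [i1, i2, i3] -> i4
    Prefixes longer than max_len keep only their most recent items.
    """
    examples = []
    for t in range(1, len(sequence)):
        prefix = sequence[max(0, t - max_len):t]
        target = sequence[t]
        examples.append((prefix, target))
    return examples


# Item IDs here are arbitrary integers standing in for games, songs, articles, etc.
history = [10, 42, 7, 99]
pairs = next_item_examples(history)

for prefix, target in pairs:
    print(prefix, "->", target)
```

Every position in the sequence becomes a supervised example, so a single long history produces many training signals rather than just one.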

The Benchmark: Steam Games Dataset

For this post, we’ll build our models on the Steam Games dataset, a rich gaming interaction dataset from UCSD’s repository:

67,287 users (raw) → 56,808 users (after 5-core filtering)

32,133 games (raw) → 6,382 games (after 5-core filtering)

2,235,453 interactions (playtime > 1 hour)

Average sequence length: 39.4 games (median: 26)

5-core filtering is a technique that removes all users and items with fewer than 5 interactions, applied iteratively until every remaining user and item has at least 5. It’s a standard preprocessing step in RecSys research to eliminate extreme cold-start cases (users who tried one game, games nobody played) that add noise without enough signal to learn from.
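The "applied iteratively" part is easy to miss, so here is a minimal pure-Python sketch of k-core filtering. It assumes interactions arrive as deduplicated `(user_id, item_id)` pairs; the function name `k_core_filter` is hypothetical, and this is not the exact preprocessing code behind the numbers above.

```python
from collections import Counter


def k_core_filter(interactions, k=5):
    """Repeatedly drop users and items with fewer than k interactions.

    interactions: list of (user_id, item_id) pairs.
    Removing a rare item can push a user below k (and vice versa),
    so we loop until a full pass removes nothing.
    """
    current = list(interactions)
    while True:
        user_counts = Counter(user for user, _ in current)
        item_counts = Counter(item for _, item in current)
        kept = [
            (user, item)
            for user, item in current
            if user_counts[user] >= k and item_counts[item] >= k
        ]
        if len(kept) == len(current):
            return kept
        current = kept


# Toy example with k=2 to show the cascade:
# item "c" is dropped first, which leaves u3 with one interaction,
# so u3 is dropped on the next pass.
toy = [
    ("u1", "a"), ("u1", "b"),
    ("u2", "a"), ("u2", "b"),
    ("u3", "b"), ("u3", "c"),
]
filtered = k_core_filter(toy, k=2)
print(filtered)
```

A single pass would have kept `("u3", "b")` even though u3 ends up with only one interaction, which is why the loop runs until nothing changes.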

Now let’s see how different architectures tackle sequential prediction.

2. GRU4Rec — When RNNs Met Recommendations (2016)

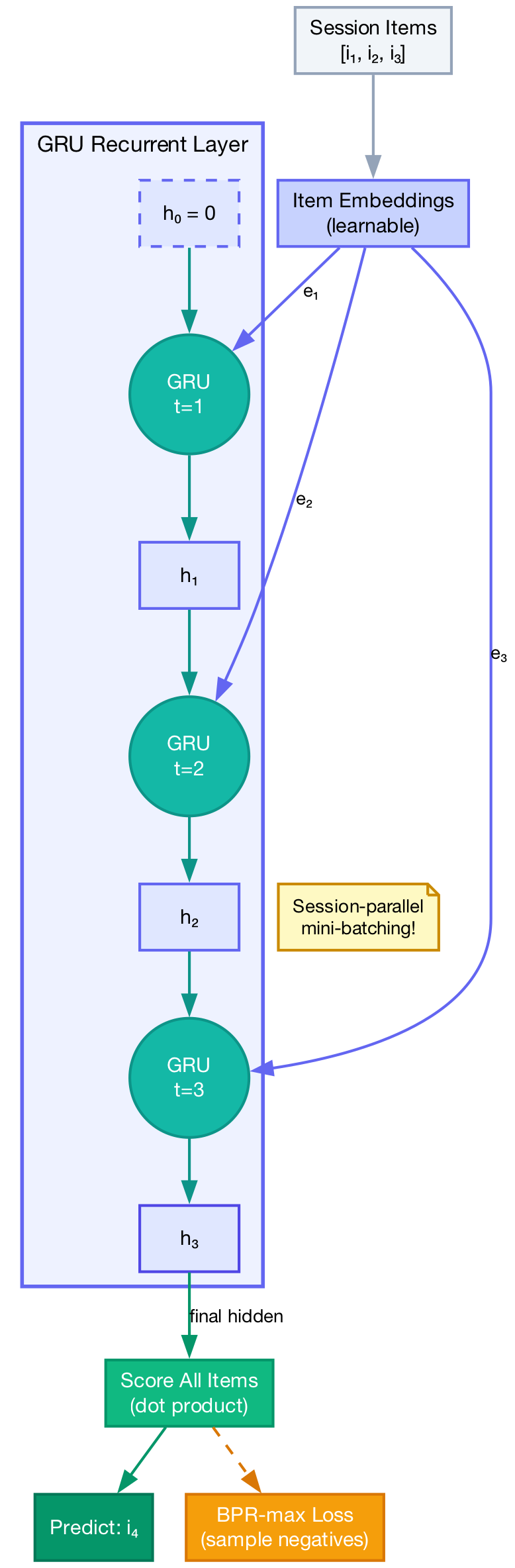

In 2016, Balázs Hidasi of Gravity R&D and his co-authors published “Session-based Recommendations with Recurrent Neural Networks” at ICLR. It was the first major work applying RNNs to sequential recommendation, and it dominated the field for nearly two years.