3 Modern Approaches to Solving Cold Start in RecSys

RecSys Series: Contextual bandits, meta-learning, and LLMs — how Spotify, TikTok, and YouTube handle new users and items

A user signs up for your streaming platform. They’ve never watched anything. They’ve never rated anything. They’ve never even scrolled. And your recommendation engine — the same engine that serves 200 million personalized feeds per day — stares at this blank profile and essentially says: “I have no idea who you are.”

This is the Cold Start Problem, and I’ve been fighting it for the better part of four years: at Meta, where new creators needed to find their audience from day one, and in the streaming world, where every new user expects a personalized experience the moment they log in. It’s a problem that’s been discussed on Hacker News since 2010, has a 400-page book written about it (Andrew Chen’s The Cold Start Problem), and STILL doesn’t have a clean answer.

This is the next installment in my RecSys series. We’ve covered the algorithmic evolution of recommendation systems, built two-tower retrieval from scratch, dissected the ranking layer — all assuming we have user data to work with. Today we drop that assumption entirely.

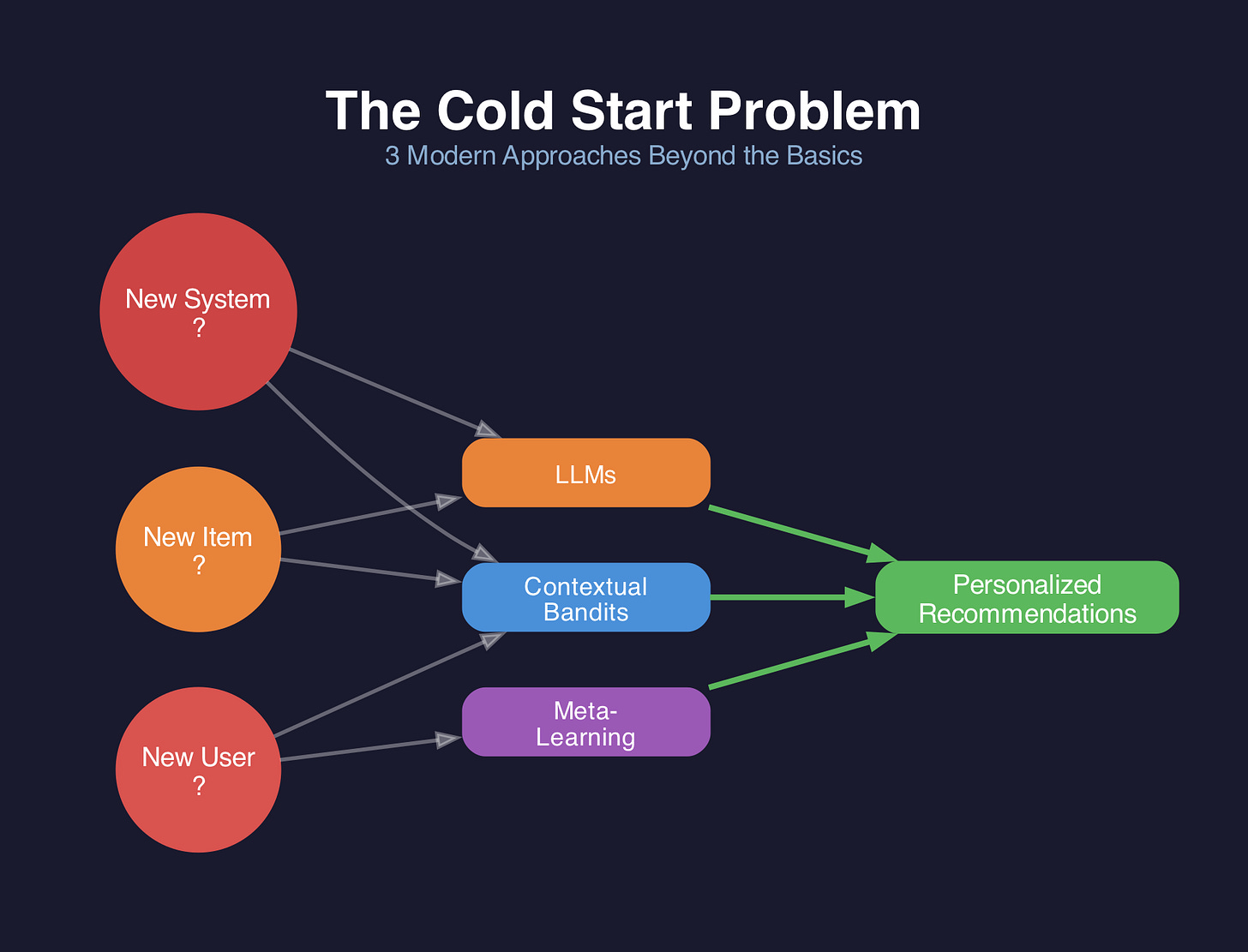

Here’s what we’ll cover:

The 3 types of cold start — they’re different problems with different solutions

Classical approaches — the baselines everyone ships first, and where they hit a ceiling

3 modern frontiers: contextual bandits, meta-learning (MAML, prototypical networks, CMML), and LLMs (feature extraction, reasoning, data generation)

How Spotify, TikTok, and YouTube actually solve this in production — with specific engineering details

A decision framework — so you know which approach fits your system, your data, and your budget

This is meant to be the definitive practitioner’s guide. Let’s dive in!

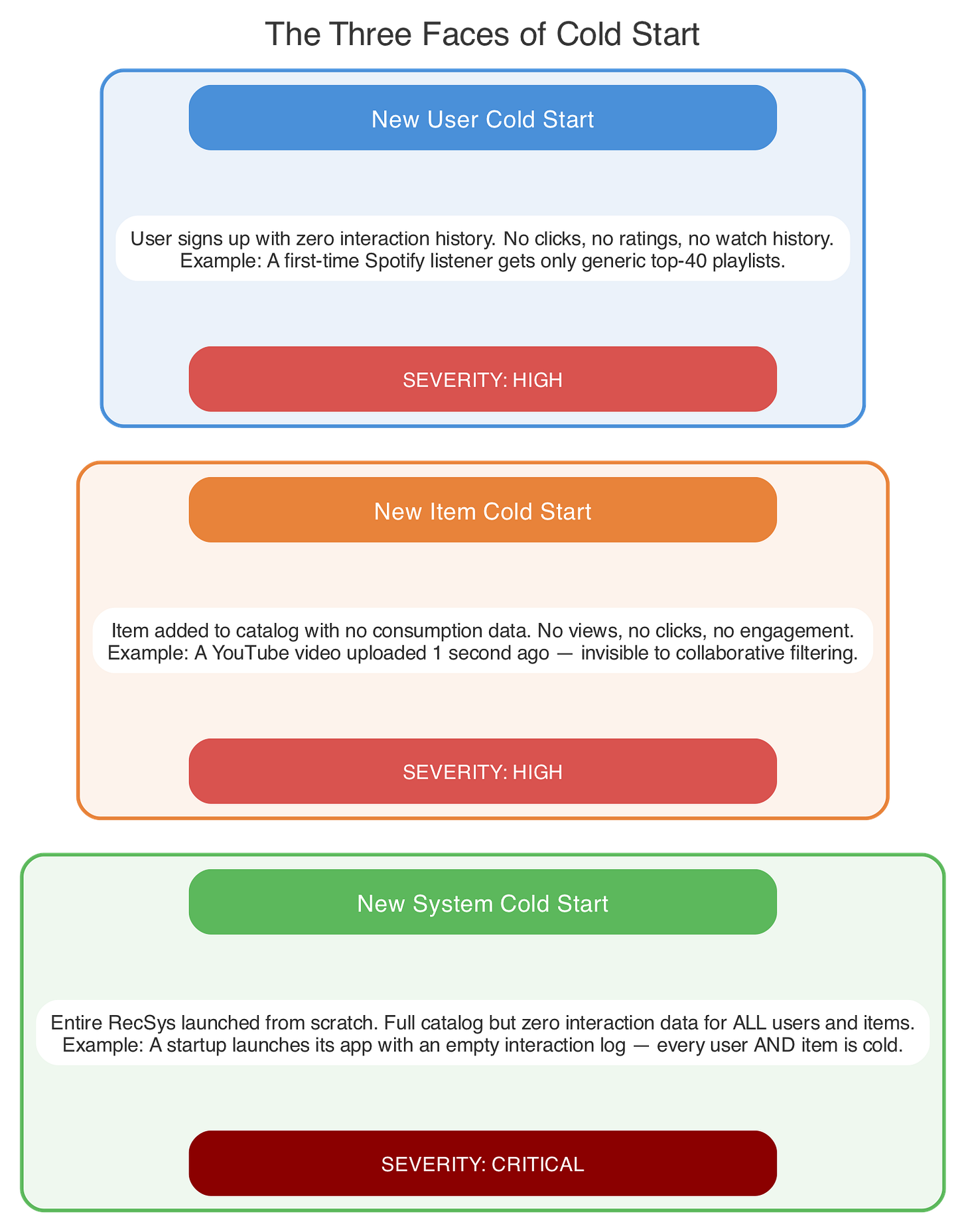

The Three Faces of Cold Start

Before we jump into solutions, let’s be precise about what we’re solving. “Cold start” isn’t one problem — it’s three distinct problems, and confusing them is one of the most common mistakes I see engineers make.

New User Cold Start

A user signs up. Zero watch history. Zero ratings. Zero clicks. Your collaborative filtering model, the one that works beautifully for your 50 million existing users, is completely blind. It relies on the user-item interaction matrix R, where entry R(u, i) represents user u’s interaction with item i. For a new user u_new, the entire row R(u_new, :) is empty: a zero vector.

This means every technique that depends on finding similar users (nearest-neighbor CF), decomposing the interaction matrix (matrix factorization), or learning user embeddings from behavior (deep learning approaches) — the entire algorithmic evolution we covered in Part 2 of this series — has literally nothing to work with. The new user is a point with no coordinates in preference space.

Mathematically, collaborative filtering predicts a rating as:

r̂(u, i) = μ + b_u + b_i + q_i^T · p_u

where μ is the global mean rating, b_u and b_i are the user and item bias terms, p_u is the user’s latent factor vector, and q_i is the item’s latent factor vector (Koren et al., 2009, “Matrix Factorization Techniques for Recommender Systems”, IEEE Computer). For a new user, both p_u and b_u are undefined, because there are no interactions to learn them from. You can initialize p_u randomly, but then the personalized term q_i^T · p_u is pure noise.
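To make the cold-user case concrete, here is a minimal NumPy sketch of the biased prediction rule from Koren et al., r̂(u, i) = μ + b_u + b_i + q_i^T · p_u, where b_u and b_i are user and item bias terms. All parameter values are made up for illustration. A common alternative to random initialization is to set a cold user’s p_u and b_u to zero; the sketch shows that this collapses every score to μ + b_i, so every new user gets the same global item ordering, which behaves much like a popularity baseline.

```python
import numpy as np

def predict(mu, b_u, b_i, p_u, Q):
    """Biased MF scores for one user against every item.

    mu:  global mean rating (scalar)
    b_u: user bias (scalar)
    b_i: item biases, shape (n_items,)
    p_u: user latent factors, shape (k,)
    Q:   item latent factors, shape (n_items, k); row i is q_i
    """
    return mu + b_u + b_i + Q @ p_u

# Hypothetical toy model: 4 items, 2 latent factors
mu = 3.5
b_i = np.array([0.4, -0.2, 0.1, 0.0])
Q = np.array([[0.9, 0.1],
              [0.2, 0.8],
              [-0.5, 0.3],
              [0.1, -0.7]])

# Warm user: bias and factors learned from their interactions
warm = predict(mu, 0.2, b_i, np.array([1.0, -0.5]), Q)

# Cold user: nothing to learn from, so bias and factors default to zero
cold = predict(mu, 0.0, b_i, np.zeros(2), Q)

print(np.argsort(-warm))  # → [0 3 1 2], shaped by the user's factors
print(np.argsort(-cold))  # → [0 2 3 1], identical to ranking by b_i alone
```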

New Item Cold Start

A video gets uploaded. A product gets listed. A song gets released. No one has interacted with it yet. Even if your model is phenomenal at scoring items with behavioral data, this item has zero behavioral signal — no clicks, no watches, no purchases. Its column R(:, i_new) in the interaction matrix is all zeros.

This creates a vicious cycle that I’ve seen destroy content platforms: the item is invisible to your retrieval-ranking pipeline because it has no engagement data. Because it’s invisible, it gets no exposure. Because it gets no exposure, it accumulates no engagement data. The item is trapped in a black hole of non-existence.

This isn’t an abstract concern — it directly affects creator retention on any content platform. If a creator uploads a show and it gets zero impressions for a week, that creator doesn’t come back. And losing the creator means losing not just that show, but everything they would have made in the future.

New System Cold Start

You’re launching a recommendation system from scratch. No users with behavioral data, no items with engagement history, no interaction matrix at all. R is entirely empty. This is the rarest variant, but it’s also the one that every startup and every new product line faces.

Here’s the uncomfortable truth that most blog posts skip: in production, the new item problem is often harder and more damaging than the new user problem. New users at least have some context you can exploit (device, location, time). New items have nothing but their own metadata. And the business cost of item-side cold start — creator churn, catalog invisibility, content deserts — compounds far faster than user-side cold start.

There’s also a regime between cold and warm that’s arguably even more important in practice: warm start — when you have 1-5 interactions. Not zero, but not enough for your models to be confident. This is where your system spends most of its time for the long tail of users and items, and it’s where the modern approaches we’ll cover really shine.

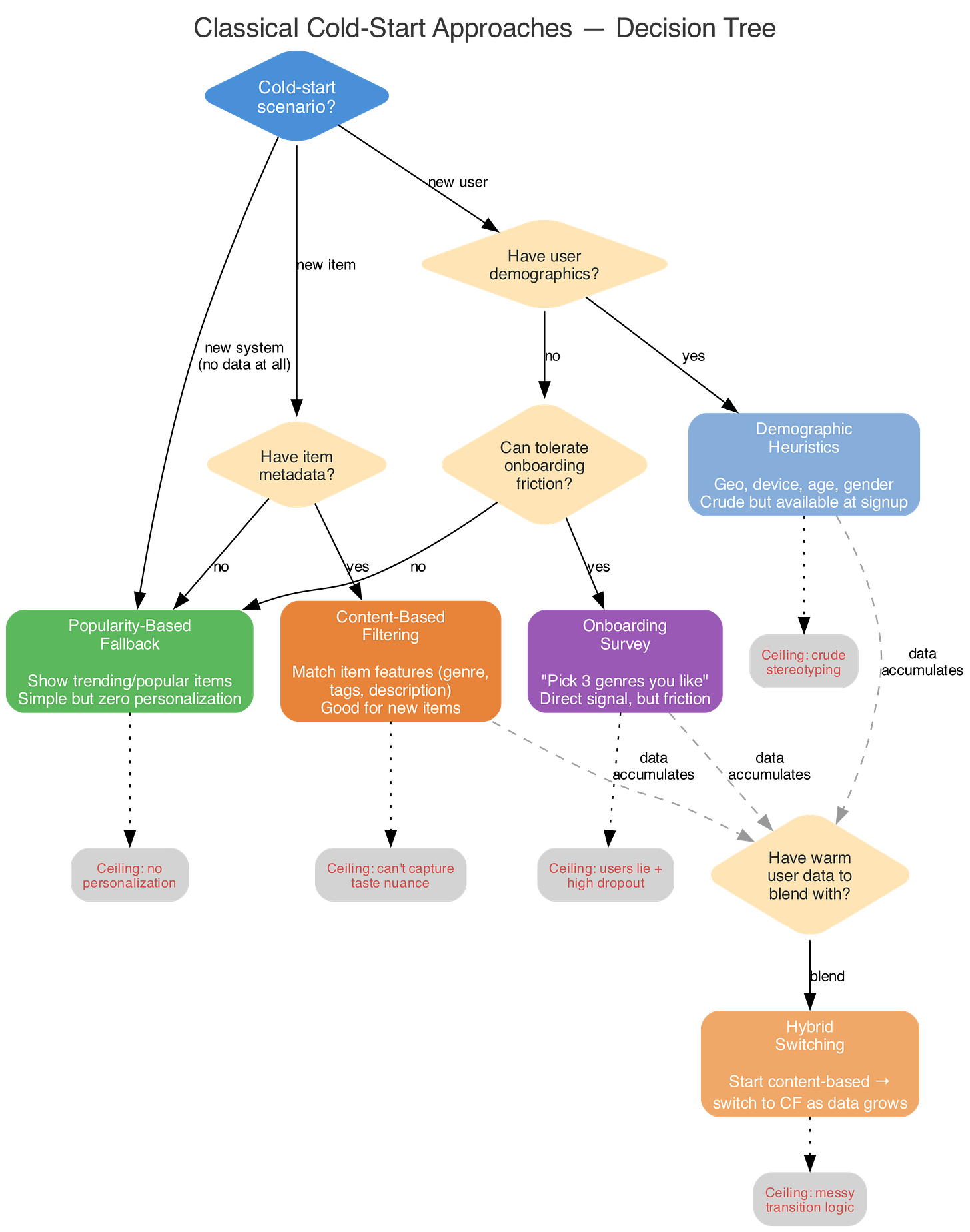

Classical Solutions (And Why They’re Not Enough)

Every recommendation system starts here. These are the baseline approaches — they work, they ship fast, and they’re better than showing nothing. But they all hit a ceiling, and understanding exactly where that ceiling is tells you when to invest in the modern approaches.

Popularity-Based Ranking

The simplest possible move: show new users whatever is trending right now. It’s the “most popular dish” approach — safe, zero personalization required, trivial to implement. You’re essentially replacing the personalized score r̂(u, i) with the global popularity score:

score(i) = Σ_u R(u, i) or score(i) = count(clicks on i in last 24h)

The obvious problem: you’ll never discover that this specific user hates action movies and loves documentaries. Everyone gets the same feed, and you learn nothing about individual preferences. It also creates a rich-get-richer feedback loop: popular items get shown more, get more clicks, and become more popular. This is the Matthew Effect in recommendation systems, and it’s brutal for new content.
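A tiny deterministic simulation shows the loop under deliberately simplified assumptions (a fixed feed size k, a constant hypothetical click-through rate ctr, and no randomness): each round the top-k items by engagement count get shown, and each impression adds to that item’s count. An item that starts at zero never enters the top-k, so it never earns the engagement it would need to get there.

```python
def simulate_popularity_loop(counts, k=2, rounds=100, ctr=0.1):
    """Simulate a feed that always shows the top-k items by engagement count.

    counts: initial engagement count per item
    k: number of feed slots per round
    ctr: hypothetical expected clicks per impression
    Returns the final counts and total impressions per item.
    """
    counts = list(counts)
    impressions = [0] * len(counts)
    for _ in range(rounds):
        # Rank purely by current popularity (ties keep the original order)
        shown = sorted(range(len(counts)), key=lambda i: counts[i], reverse=True)[:k]
        for i in shown:
            impressions[i] += 1
            counts[i] += ctr  # exposure turns into more engagement
    return counts, impressions

# Two established items and one brand-new item with no engagement yet
final_counts, impressions = simulate_popularity_loop([50, 30, 0])
print(impressions)  # → [100, 100, 0]: the new item never gets an impression
```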

That said, popularity-based ranking has one underappreciated strength: it surfaces items that are currently relevant. A user might not know they want to watch the Oscar-nominated film that just released, but a time-decayed popularity score will surface it naturally.
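One way to implement a time-decayed popularity score is an exponentially weighted click count, where each click contributes 0.5^(age / half-life). The (item_id, timestamp) click-log format, the function names, and the 24-hour default half-life are assumptions for illustration; the half-life is the knob that trades freshness against stability, and you would tune it per domain.

```python
import time
from collections import defaultdict

def decayed_popularity(clicks, now=None, half_life_hours=24.0):
    """Score items by exponentially time-decayed click counts.

    clicks: iterable of (item_id, unix_timestamp_seconds) pairs
            (a hypothetical click-log format).
    A click one half-life old counts 0.5, two half-lives old 0.25, and so on.
    """
    now = time.time() if now is None else now
    half_life_s = half_life_hours * 3600.0
    scores = defaultdict(float)
    for item_id, ts in clicks:
        age_s = max(0.0, now - ts)  # guard against clock skew / future timestamps
        scores[item_id] += 0.5 ** (age_s / half_life_s)
    return dict(scores)

def top_popular(clicks, n=10, **kwargs):
    """Return the n item ids with the highest decayed popularity."""
    scores = decayed_popularity(clicks, **kwargs)
    return sorted(scores, key=scores.get, reverse=True)[:n]

# Usage: 3 fresh clicks outrank 5 clicks from a week ago
now = 1_000_000.0
week = 7 * 24 * 3600
log = [("new_film", now - 60)] * 3 + [("old_hit", now - week)] * 5
print(top_popular(log, now=now))  # → ['new_film', 'old_hit']
```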

Content-Based Fallback

Instead of using behavioral signals (which don’t exist for cold-start entities), use the item’s features directly. A movie’s genre, director, cast, plot keywords, runtime, year, language — these are all available from day one, before anyone watches it.

The basic approach: represent each item as a feature vector f_i (using TF-IDF, one-hot encoding, or pretrained embeddings), and represent the user as a weighted average of the features of items they’ve interacted with:

p_u = (Σ_{i ∈ I_u} w_i · f_i) / (Σ_{i ∈ I_u} w_i)

where I_u is the set of items user u has interacted with

w_i is the weight for item i (1 in the basic case, which reduces this to a plain mean, or a time-decay weight that favors recent interactions)

Then score new items by cosine similarity:

score(u, i) = cos(p_u, f_i) = (p_u · f_i) / (||p_u|| · ||f_i||)
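Putting the two formulas together, here is a minimal NumPy sketch of a time-decayed profile scored by cosine similarity. The exponential weights w_i = exp(-decay_rate · age_i) and every function and parameter name are assumptions; any non-negative weights would do. The sketch normalizes by the sum of the weights so the profile is a true weighted average; with all w_i = 1 that equals dividing by |I_u|, and because cosine similarity ignores vector length, the choice of normalizer does not change the ranking.

```python
import numpy as np

def user_profile(item_features, interacted, ages_days, decay_rate=0.1):
    """Weighted average of the interacted items' feature vectors (p_u).

    item_features: (n_items, d) array of item feature vectors f_i
    interacted: indices of items the user interacted with (I_u)
    ages_days: age of each interaction in days, aligned with `interacted`
    decay_rate: hypothetical exponential decay rate per day
    """
    w = np.exp(-decay_rate * np.asarray(ages_days, dtype=float))  # w_i
    F = item_features[np.asarray(interacted)]
    return (w[:, None] * F).sum(axis=0) / w.sum()

def cosine_scores(profile, item_features, eps=1e-12):
    """cos(p_u, f_i) for every item i; eps avoids division by zero."""
    norms = np.linalg.norm(item_features, axis=1) * np.linalg.norm(profile)
    return item_features @ profile / np.maximum(norms, eps)

# Toy catalog with feature dims [documentary, action]
F = np.array([[1.0, 0.0],   # item 0: documentary
              [0.0, 1.0],   # item 1: action
              [0.8, 0.2],   # item 2: mostly documentary
              [0.2, 0.8]])  # item 3: mostly action
# A documentary watched yesterday, an action movie watched a month ago
p = user_profile(F, interacted=[0, 1], ages_days=[1, 30])
print(np.argsort(-cosine_scores(p, F)))  # → [0 2 3 1]
```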

This is the core difference between content-based and collaborative filtering: content-based doesn’t need the interaction matrix, only item features and at least some user signal.

The catch: this works far better for new items than for new users. A new item has features you can compute similarity against. A new user with zero interactions has no profile vector p_u at all — you can’t compute an average of an empty set. You’d need at least one click to get started.

Demographic Heuristics

Use whatever you get at signup: geo-location, device type, language, age bracket, operating system. A user signing up from Tokyo on an iPhone at 11 PM likely has different preferences than someone from Texas on a smart TV at 2 PM on a Saturday.

Formally, you’re replacing the missing behavioral profile with a demographic profile:

p_u = g(demographics_u)

where g is a learned function (often a simple lookup table or a shallow neural network) that maps demographic features to the same embedding space as your warm users. You train g on your existing warm users — learning, for example, that users aged 25-34 in urban Japan tend to prefer anime and J-drama.
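The “simple lookup table” version of g can be sketched in a few lines, assuming each warm user already has a learned embedding and a demographic bucket key (the key format, function names, and min_users threshold are hypothetical). Each bucket maps to the mean embedding of its warm users, and buckets too small to trust back off to the global mean.

```python
import numpy as np
from collections import defaultdict

def fit_demographic_table(warm_embeddings, warm_buckets, min_users=50):
    """Fit g as a lookup table: demographic bucket -> mean warm-user embedding.

    warm_embeddings: (n_users, d) array of learned warm-user embeddings
    warm_buckets: one hashable demographic key per user, e.g. ("JP", "25-34")
    min_users: hypothetical threshold; smaller buckets are left out of the
               table so they fall back to the global mean instead of noise
    """
    groups = defaultdict(list)
    for vec, bucket in zip(warm_embeddings, warm_buckets):
        groups[bucket].append(vec)
    table = {b: np.mean(v, axis=0) for b, v in groups.items() if len(v) >= min_users}
    global_mean = np.mean(warm_embeddings, axis=0)
    return table, global_mean

def g(bucket, table, global_mean):
    """Embedding for a cold user: bucket mean if known, else the global mean."""
    return table.get(bucket, global_mean)

# Toy data: 60 warm users in one bucket, only 3 in another
emb = np.array([[1.0, 0.0]] * 60 + [[0.0, 1.0]] * 3)
keys = [("JP", "25-34")] * 60 + [("US", "18-24")] * 3
table, global_mean = fit_demographic_table(emb, keys)
print(g(("JP", "25-34"), table, global_mean))  # bucket mean
print(g(("US", "18-24"), table, global_mean))  # too few users: global mean
```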

The obvious limitation: demographics are a coarse proxy for user preferences. Not every 30-year-old in Tokyo likes the same content. You’re fighting the stereotype problem — making assumptions about individuals based on group statistics. But in the zero-data regime, coarse is better than random.

Onboarding Surveys

“Choose 3 genres you like.” “Rate these 5 movies.” “Pick your favorite artists.” Direct, explicit preference signal that bypasses the cold-start chicken-and-egg entirely.

The catch? Every additional question increases signup friction and hurts conversion. Research from the Baymard Institute shows that each additional step beyond 3-4 in a signup flow increases abandonment significantly, and streaming onboarding is no exception. And users lie, or more precisely, they pick aspirationally rather than truthfully. (A user saying “Yes, I definitely want to watch cerebral documentaries about climate change” might proceed to binge Love Island for 6 hours.)

There’s a rich literature on optimal onboarding question selection. Golbandi et al. (2011, “Adaptive Bootstrapping of Recommender Systems Using Decision Trees”, WSDM) showed you can use decision trees to pick the maximally informative items to show in an onboarding survey — items where the user’s response tells you the most about their latent preferences.
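The intuition can be sketched as a single greedy split. This is a simplified illustration in the spirit of Golbandi et al., not their algorithm: for each candidate item, split warm users by whether they liked it, then measure how tightly each group’s user embeddings cluster. The item whose answer leaves the least within-group variance is the most informative first question, and recursing on each group would grow the split into a tree. The boolean liked matrix, the embedding input, and all names are assumptions.

```python
import numpy as np

def split_cost(U, mask):
    """Sum of squared deviations of user embeddings from their group means
    after splitting users into the mask / ~mask groups."""
    cost = 0.0
    for group in (U[mask], U[~mask]):
        if len(group):
            cost += ((group - group.mean(axis=0)) ** 2).sum()
    return cost

def best_onboarding_item(U, liked, candidates):
    """Pick the candidate whose like / not-like answer best separates users.

    U: (n_users, d) warm-user embeddings
    liked: (n_users, n_items) boolean matrix, True if the user liked the item
    candidates: item indices eligible for the survey
    """
    costs = {i: split_cost(U, liked[:, i]) for i in candidates}
    return min(costs, key=costs.get)

# Toy data: two taste clusters; item 1 cleanly separates them
U = np.array([[1.0, 0.0], [0.9, 0.1], [0.0, 1.0], [0.1, 0.9]])
liked = np.array([[True, True, False],
                  [False, True, True],
                  [True, False, False],
                  [False, False, True]])
print(best_onboarding_item(U, liked, candidates=[0, 1, 2]))  # → 1
```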

Hybrid Switching

The textbook answer: start with content-based or popularity-based recommendations, gradually switch to collaborative filtering as behavioral data accumulates. Formally:

r̂(u, i) = α(u) · r̂_PB(u, i) + (1 - α(u)) · r̂_CF(u, i)

where α(u) is a function of how much data you have for user u — close to 1 for cold users (trust popularity-based), close to 0 for warm users (trust collaborative filtering).

It sounds clean in a blog post, but it can be incredibly messy in production. Here’s why:

The blending weight α(u) needs a functional form. Is it a step function (hard cutover at 10 interactions)? Sigmoid? Linear ramp? Each choice creates different user experiences, and there’s no universal right answer — you have to tune it per domain.

Score calibration is a nightmare. Content-based or popularity scores and CF scores live on completely different scales and distributions. Naively adding them produces garbage — you need score normalization (min-max? z-score? rank-based?) that itself requires careful calibration.

The transition can jar users. A user at α=0.6 today and α=0.3 tomorrow might see a completely different feed. Without smoothing, users experience sudden recommendation “personality shifts” that erode trust.

You’re running two serving pipelines. Two models to train, two feature stores to maintain, two sets of latency budgets. The operational complexity of production recommendation systems is one of the most underappreciated challenges — and hybrid switching doubles it.
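A minimal sketch of the blend, assuming a decreasing sigmoid for α(u) over the user’s interaction count and per-request z-score normalization to put the two score lists on a comparable scale. The midpoint and steepness values, the function names, and the toy scores are hypothetical; rank-based normalization is an equally valid choice, and a smooth α softens (but does not remove) the feed shifts described above.

```python
import numpy as np

def alpha(n_interactions, midpoint=10.0, steepness=0.5):
    """Weight on the popularity-based score: ~1 for cold users, ~0 for warm.

    A decreasing sigmoid in the interaction count; alpha = 0.5 at midpoint.
    Both parameters are hypothetical and need per-domain tuning.
    """
    return 1.0 / (1.0 + np.exp(steepness * (n_interactions - midpoint)))

def zscore(x, eps=1e-9):
    """Standardize a score vector so different models become comparable."""
    x = np.asarray(x, dtype=float)
    return (x - x.mean()) / (x.std() + eps)

def hybrid_scores(pb_scores, cf_scores, n_interactions):
    """alpha * r_PB + (1 - alpha) * r_CF, computed on z-scored inputs."""
    a = alpha(n_interactions)
    return a * zscore(pb_scores) + (1.0 - a) * zscore(cf_scores)

# Usage: same 3 candidates, raw scores on very different scales
pb = [12000, 800, 5000]   # e.g. recent click counts
cf = [0.2, 0.9, 0.4]      # e.g. CF affinity scores
for n in (0, 10, 40):
    print(n, round(float(alpha(n)), 3), np.argsort(-hybrid_scores(pb, cf, n)))
```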

The Ceiling

All of these classical approaches are basically guessing. Educated guessing, sure — but still guessing. They don’t actively try to learn about the user. They wait passively for data to trickle in and hope the user sticks around long enough. That’s fine for day one, maybe week one. But if your cold-start strategy is still “show popular stuff and pray” after a month, you’re leaving massive value on the table.

Here’s a simple Python sketch of the popularity + content-based fallback:

```python
import numpy as np
from sklearn.metrics.pairwise import cosine_similarity

def cold_start_recommend(user, items, item_features, popular_items, n=10):
    """Simple cold-start fallback: content-based if we have ANY
    signal, popularity otherwise."""
    item_features = np.asarray(item_features)  # (n_items, d)
    if user.interactions:  # even 1 click gives us something
        # Build the user profile as the mean of interacted item features
        profile = np.mean(
            [item_features[i] for i in user.interactions], axis=0
        )
        scores = cosine_similarity([profile], item_features)[0]
        # Don't re-recommend items the user has already interacted with
        scores[list(user.interactions)] = -np.inf
        top_items = np.argsort(scores)[::-1][:n]
        return [items[i] for i in top_items]

    # Zero interactions → fall back to popularity
    return popular_items[:n]
```

This is fine for day one. But the three modern approaches that follow are what separate a good recommendation system from a great one.

The rest of this post covers the three modern frontiers — contextual bandits, meta-learning, and LLMs — plus how Spotify, TikTok, and YouTube solve cold start in production, and a decision framework for choosing the right approach.