Your Ranking Model Is Right. Your Recommendations Are Wrong

RecSys Series Part 8: How diversity, freshness, and business constraints turn a ranked list into a product-ready feed

Hey, Rahul here! 👋 Each week, I publish long-form posts covering ML, AI, and system design for MLwhiz. Paid subscribers also get how-to guides with full code walkthroughs. I publish occasional extra articles.

Here’s something they don’t teach you in ML courses:

A perfectly relevant recommendation list is usually a terrible one.

You spend months training a ranking model. Features, architectures, multi-task objectives — the works. Then the product team walks in: “Can you make sure we don’t show 5 horror movies in a row? And boost new releases? Oh, and reserve slot 3 for promoted content.”

Each request costs you relevance. The question isn’t whether to spend — it’s how much.

Think of it as a budget. Your ranking model gives you relevance scores for every item. Re-ranking is the art of spending that relevance wisely — trading some accuracy for diversity, freshness, fairness, and business value.

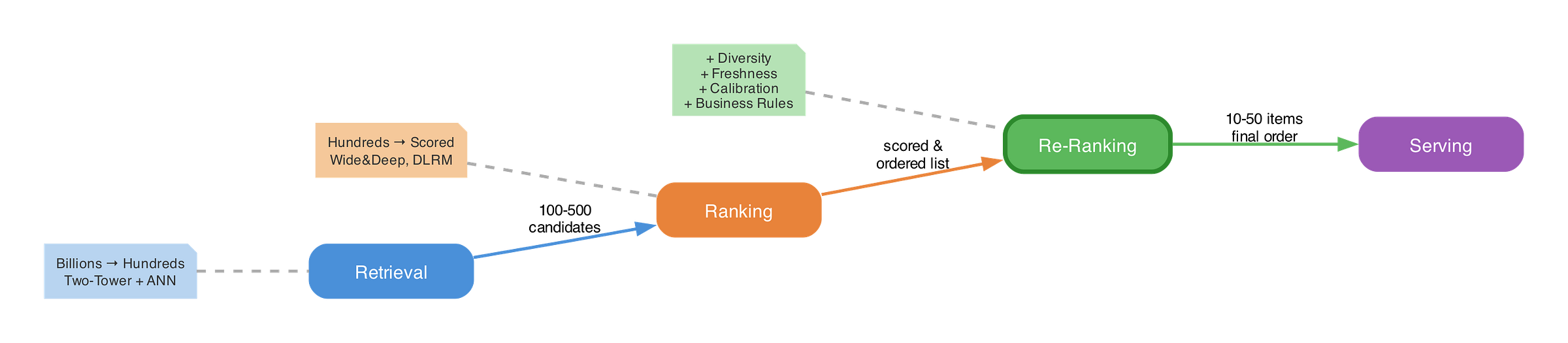

This is Part 8 of the RecSys for MLEs series. We’ve covered the fundamentals, the evolution from CF to deep learning, the 3-stage funnel where I first introduced re-ranking as the “business layer,” two-tower retrieval, vector search, the ranking layer, and the cold start problem.

Today, we’re opening up that final layer. Here’s what we’ll cover:

The Set Problem → Why sorting by relevance produces bad recommendations

Diversity → From dedup rules to Determinantal Point Processes (YouTube’s production system)

Calibration → Matching your recommendations to the user’s taste distribution

Freshness → Getting new content into the feed without wrecking relevance

Business Constraints → The product rules that shape the final feed

Multi-Objective Re-Ranking → Combining everything: scalarization, constraints, and 2D layouts

The Practitioner’s Playbook → When to use what, and the pitfalls that trip everyone up

Let’s dive in!

1. Why Re-Ranking Exists — The Set Problem

Your ranking model scores items independently. Item A gets 0.92. Item B gets 0.89. Item C gets 0.87. Sort descending. Done.

Except it’s not done. Because when you look at your top-10 list, items A, B, and C are all psychological thrillers from the same director. Items D through G are also thrillers. The model did exactly what you asked — it found the most relevant items. But the resulting set is terrible.

This is what I call the set problem: optimizing each item independently doesn’t optimize the set.
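To make the set problem concrete, here is a minimal sketch with made-up data. The item IDs, genres, and scores are hypothetical, chosen only to mirror the thriller example above.

```python
from collections import Counter

# Hypothetical scored candidates: (item_id, genre, relevance score).
candidates = [
    ("A", "thriller", 0.92),
    ("B", "thriller", 0.89),
    ("C", "thriller", 0.87),
    ("D", "thriller", 0.85),
    ("E", "comedy", 0.84),
    ("F", "thriller", 0.83),
    ("G", "documentary", 0.80),
    ("H", "drama", 0.78),
]


def top_k_by_relevance(items, k):
    """Sort by score descending and keep the first k items."""
    return sorted(items, key=lambda item: item[2], reverse=True)[:k]


top5 = top_k_by_relevance(candidates, k=5)

# Count how many items of each genre made it into the top 5.
genre_counts = Counter(genre for _, genre, _ in top5)
```

Every item in `top5` is individually the best available choice, yet four of the five slots go to one genre. Nothing in a per-item score can see that.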

Here’s how to think about it. Ranking answers: “How relevant is this item to this user?” Re-ranking answers a harder question: “What’s the best collection of items to show this user?”

The input to re-ranking is typically 100-500 scored items from your ranker. The output is the final 10-50 items in their display order. And the constraints are everything your ranking model doesn’t know about: diversity requirements, content freshness, promotional obligations, fairness targets, and a dozen product-specific rules.

I remember a team meeting where someone pulled up our top-10 list for a test user: ten nearly identical sci-fi action movies. “The model is working perfectly,” someone said. Technically correct — and completely useless. The top-10 wasn’t a recommendation; it was a redundancy report.

Netflix does this at massive scale — 15,000+ shows, nearly 300 million users, and a homepage that needs to feel both personally relevant and excitingly diverse. Their page construction system doesn’t just rank shows; it considers the composition of each row and the relationships between rows.

Here’s the key mental model I want you to hold for this entire post: re-ranking is spending a relevance budget. Your ranking model gives you a relevance score for each item. That score is currency. Every diversity constraint, every freshness boost, every business rule costs some of that relevance. The art is deciding how much to spend on each.
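One way to make the budget measurable is to compare the relevance of the list you actually show against the best list of the same length. The sketch below is an illustration only: `relevance_spent` is a hypothetical helper name, and it assumes scores are positive so the ratio is well defined.

```python
def relevance_spent(scores, reranked_indices):
    """Fraction of the best achievable top-k relevance given up by a re-ranked list.

    scores: relevance score per candidate (assumed positive)
    reranked_indices: indices of the items actually shown
    """
    k = len(reranked_indices)
    best = sum(sorted(scores, reverse=True)[:k])       # pure-relevance top-k
    actual = sum(scores[i] for i in reranked_indices)  # what the re-ranker shows
    return (best - actual) / best


scores = [0.92, 0.89, 0.87, 0.85, 0.84, 0.80]

# Hypothetical re-ranked list: keep the top item, swap in two lower-scored items.
reranked = [0, 4, 5]
spent = relevance_spent(scores, reranked)
```

Here the re-ranked list gives up 0.12 of a possible 2.68 in summed relevance, a bit under 5% of the budget. Tracking a number like this per rule tells you which constraints are expensive.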

Let’s look at the algorithms that make this possible.

2. Diversity — From Rules to Determinantal Point Processes

Diversity is the most visible re-ranking objective. When a user sees 10 items from the same genre, something has clearly gone wrong. But “add diversity” is easy to say and surprisingly hard to get right. Three levels of sophistication:

Level 1: Rule-Based Dedup

The simplest approach is just writing rules:

- “No more than 2 items from the same category in the top 5”
- “No two items from the same creator in a row”
- “At least 1 item from ‘trending’ in top 3”
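As a rough sketch of the first rule, a single greedy pass over the ranked list is enough. The function name, the `(item_id, category)` tuple layout, and the choice to push skipped items to just after the head are all assumptions made for this illustration.

```python
from collections import Counter


def cap_category_in_head(ranked_items, head_size=5, max_per_category=2):
    """Fill the first head_size slots with at most max_per_category items
    per category. Skipped items are placed right after the head, in their
    original relevance order.

    ranked_items: list of (item_id, category), sorted by relevance descending
    """
    head, deferred = [], []
    counts = Counter()
    tail_start = len(ranked_items)

    for pos, (item_id, category) in enumerate(ranked_items):
        if len(head) == head_size:
            tail_start = pos  # head is full; everything from here is untouched
            break
        if counts[category] < max_per_category:
            head.append((item_id, category))
            counts[category] += 1
        else:
            deferred.append((item_id, category))

    return head + deferred + ranked_items[tail_start:]


ranked = [
    ("A", "thriller"), ("B", "thriller"), ("C", "thriller"), ("D", "thriller"),
    ("E", "comedy"), ("F", "thriller"), ("G", "documentary"), ("H", "drama"),
]
capped = cap_category_in_head(ranked)
capped_ids = [item_id for item_id, _ in capped]
```

With this input the head becomes A, B, E, G, H, and the three deferred thrillers follow it. If the candidate pool cannot fill the head under the cap, the head simply comes out shorter than `head_size`.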

Before YouTube deployed their DPP system (more on that later in this post), they used exactly these kinds of heuristics: fuzzy deduplication (removing items too similar to ones already selected) and sliding window constraints (at most n out of every m items from the same type).
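A sliding window constraint can be sketched in a few lines. This is an illustrative greedy version, not YouTube's implementation: the function name, the `(item_id, item_type)` layout, and the fallback to relevance order when no candidate satisfies the rule are assumptions.

```python
def sliding_window_rerank(ranked_items, max_same=2, window=3):
    """Allow at most max_same items of one type in any `window` consecutive slots.

    ranked_items: list of (item_id, item_type), sorted by relevance descending
    """
    remaining = list(ranked_items)
    result = []

    while remaining:
        # Types of the last (window - 1) items already placed.
        recent = [t for _, t in result[-(window - 1):]] if window > 1 else []

        # Take the highest-ranked remaining item that keeps the window valid.
        pick = None
        for i, (_, item_type) in enumerate(remaining):
            if recent.count(item_type) < max_same:
                pick = i
                break

        if pick is None:
            pick = 0  # nothing satisfies the rule; fall back to relevance order

        result.append(remaining.pop(pick))

    return result


ranked = [
    ("A", "video"), ("B", "video"), ("C", "video"),
    ("D", "short"), ("E", "video"), ("F", "live"),
]
windowed_ids = [item_id for item_id, _ in sliding_window_rerank(ranked)]
```

On this input, item D moves up one slot to break the run of three videos, and the rest keep their relative order.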

Rules are fast, interpretable, and easy to debug. But they’re also brittle. They can’t capture nuanced notions of similarity — “these are both thrillers” is a rule; “these have similar emotional arcs” is not. And they compose badly: stack 5 rules on top of each other and you’ll find they frequently conflict.

Level 2: Maximal Marginal Relevance (MMR)

MMR is the first real algorithmic approach to diversity. It was originally proposed for document retrieval, but it maps perfectly to recommendations.

The idea is beautifully simple. Instead of selecting items by relevance alone, you select greedily: at each step, pick the item that best balances relevance with dissimilarity to items you’ve already selected.

```python
import numpy as np
from sklearn.metrics.pairwise import cosine_similarity


def mmr_rerank(relevance_scores, item_embeddings, lambda_param=0.5, top_k=10):
    """
    Maximal Marginal Relevance re-ranking.

    Args:
        relevance_scores: array of shape (N,), ranking model scores
        item_embeddings: array of shape (N, d), item feature vectors
        lambda_param: trade-off between relevance (1.0) and diversity (0.0)
        top_k: number of items to select

    Returns:
        selected: list of indices in selection order
    """
    n_items = len(relevance_scores)
    sim_matrix = cosine_similarity(item_embeddings)

    selected = []
    candidates = list(range(n_items))

    # Never try to select more items than exist
    for _ in range(min(top_k, n_items)):
        best_score = -np.inf
        best_idx = None

        for idx in candidates:
            # Relevance term
            rel = relevance_scores[idx]

            # Max similarity to any already-selected item
            if selected:
                max_sim = max(sim_matrix[idx][s] for s in selected)
            else:
                max_sim = 0

            # MMR score: balance relevance vs. novelty
            score = lambda_param * rel - (1 - lambda_param) * max_sim

            if score > best_score:
                best_score = score
                best_idx = idx

        selected.append(best_idx)
        candidates.remove(best_idx)

    return selected
```

The lambda_param is your knob. At λ=1.0, MMR is pure relevance (no diversity). At λ=0.0, it’s pure diversity (ignores relevance). In practice, values between 0.5 and 0.7 work well.

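Each selection step costs O(Nk) similarity lookups (N candidates, each compared against up to k already-selected items), so building the full list is O(Nk²) on top of computing the similarities. That is fast for a few hundred candidates. But MMR has a fundamental limitation: it’s myopic. At each step, it only compares the candidate to items already selected. It never evaluates the global quality of the final set.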
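If the inner loop ever becomes a bottleneck, one common trick is to keep a running maximum similarity per candidate and update it only against the newly selected item. The sketch below is an alternative formulation under that assumption, using NumPy only. The name `mmr_rerank_fast` is made up for this example, and it should produce the same selections as the loop above up to tie-breaking.

```python
import numpy as np


def mmr_rerank_fast(relevance_scores, item_embeddings, lambda_param=0.5, top_k=10):
    """MMR with a running max-similarity vector instead of an N x N matrix."""
    rel = np.asarray(relevance_scores, dtype=float)
    emb = np.asarray(item_embeddings, dtype=float)

    # L2-normalize rows so dot products are cosine similarities.
    norms = np.linalg.norm(emb, axis=1, keepdims=True)
    emb = emb / np.clip(norms, 1e-12, None)

    n_items = len(rel)
    max_sim = np.zeros(n_items)              # no penalty before the first pick
    available = np.ones(n_items, dtype=bool)
    selected = []

    for step in range(min(top_k, n_items)):
        scores = lambda_param * rel - (1 - lambda_param) * max_sim
        scores[~available] = -np.inf         # never re-pick a selected item
        best = int(np.argmax(scores))
        selected.append(best)
        available[best] = False

        # Only similarities to the newly selected item need computing.
        sims = emb @ emb[best]
        max_sim = sims if step == 0 else np.maximum(max_sim, sims)

    return selected


# Toy data: items 0 and 1 are near-duplicates, item 2 points elsewhere.
rel = [0.9, 0.85, 0.8, 0.5]
emb = [[1.0, 0.0], [1.0, 0.05], [0.0, 1.0], [0.7, 0.7]]

pure_relevance = mmr_rerank_fast(rel, emb, lambda_param=1.0, top_k=3)
balanced = mmr_rerank_fast(rel, emb, lambda_param=0.5, top_k=3)
```

With λ=1.0 the near-duplicate pair takes the first two slots. With λ=0.5 item 2 jumps ahead of item 1, because item 1 is almost identical to the item already picked. Each step here costs one matrix-vector product, and the full N×N similarity matrix is never built.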

Level 3: Determinantal Point Processes (DPP)

This is where things get interesting.

A DPP is a probabilistic model that assigns higher probability to subsets of items that are both high-quality AND diverse. Unlike MMR’s pairwise comparisons, a DPP evaluates the entire subset at once.

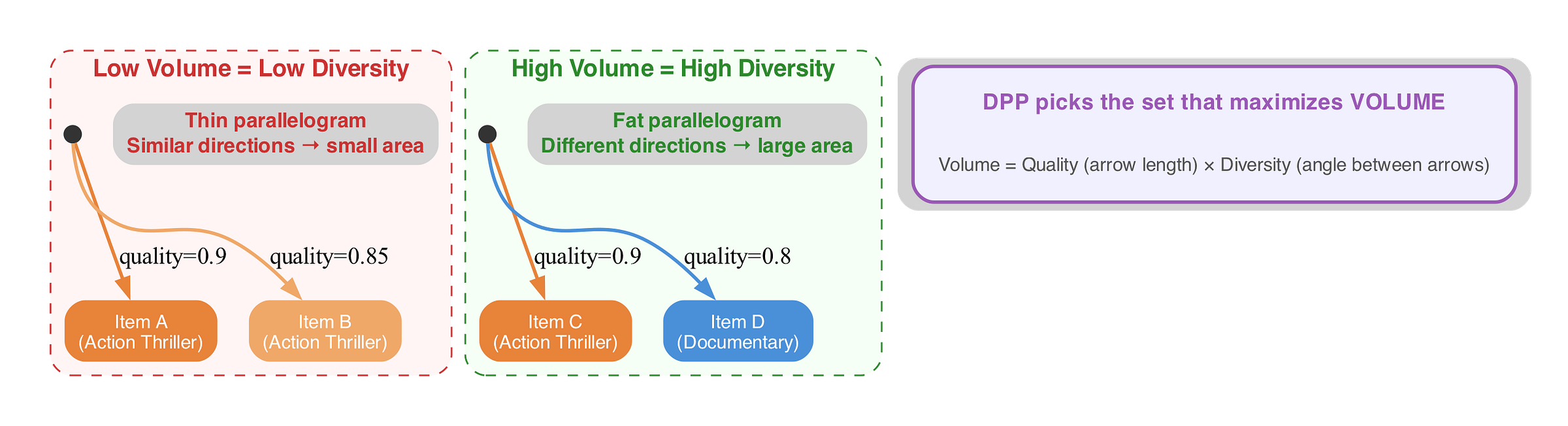

Here’s the intuition: Imagine each item as an arrow in a high-dimensional space. The arrow’s length represents quality (the ranking model’s score). The arrow’s direction represents the item’s characteristics (its embedding). A DPP selects the set of arrows that spans the maximum volume — you want arrows that are both long (high quality) AND point in different directions (diverse).

Mathematically, we define a kernel matrix L where each entry captures both quality and similarity:

L[i,j] = q_i × q_j × similarity(i,j)

where q_i is item i’s quality score (from your ranker) and similarity(i,j) is the cosine similarity between item embeddings. The probability of selecting a subset S is proportional to det(L_S), the determinant of the submatrix formed by those items. Geometrically, that determinant is the squared volume of the parallelepiped spanned by the items’ quality-scaled embedding vectors.
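In code, the kernel and the subset score are only a few lines of NumPy. This is a minimal sketch of the definition above, not a production DPP: `build_dpp_kernel` and `subset_score` are hypothetical helper names, and the quality scores and 2-D embeddings are toy values.

```python
import numpy as np


def build_dpp_kernel(quality, embeddings):
    """L[i, j] = q_i * q_j * cosine_similarity(i, j)."""
    q = np.asarray(quality, dtype=float)
    emb = np.asarray(embeddings, dtype=float)
    emb = emb / np.linalg.norm(emb, axis=1, keepdims=True)  # unit-length rows
    similarity = emb @ emb.T
    return np.outer(q, q) * similarity


def subset_score(L, subset):
    """Unnormalized DPP probability of a subset: det of its submatrix."""
    idx = np.asarray(subset)
    return float(np.linalg.det(L[np.ix_(idx, idx)]))


# Toy items: 0 and 1 are similar (cosine 0.8), 0 and 2 are orthogonal.
quality = [0.9, 0.85, 0.7]
embeddings = [[1.0, 0.0], [0.8, 0.6], [0.0, 1.0]]

L = build_dpp_kernel(quality, embeddings)
similar_pair = subset_score(L, [0, 1])   # higher quality, but redundant
diverse_pair = subset_score(L, [0, 2])   # lower quality, but orthogonal
```

Item 1 has a higher quality score than item 2, yet the pair {0, 2} gets the larger determinant (about 0.40 versus 0.21) because its two vectors span more area. That is the quality-versus-diversity trade-off expressed as a single number.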

That’s abstract. Let me walk through it with three movies.